Hack2023 2nd Prize

Team from Google LLC and Peking University:

* Qi Tang - Senior Hardware Engineer - Google

* Yi Han - Google

Project Videos

https://www.youtube.com/watch?v=zmEYpDqo0FU

Latest revision as of 10:05, 28 March 2024

2nd Prize Award

Project Sheikah Tower

AI Assistants powered by local people

Enhance usefulness based on time and place

Team

Introduction

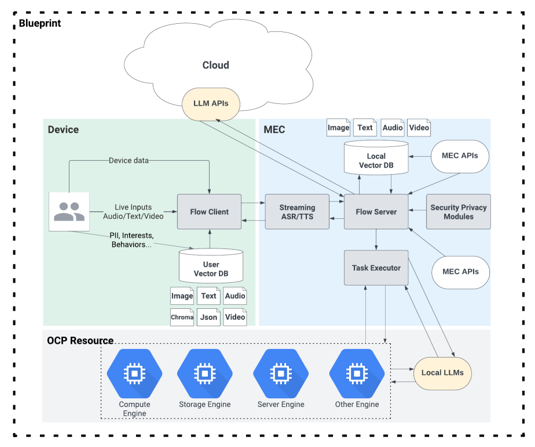

The Edge-Native Real-Time Voice AI Assistant provides language and speech services enhanced with local information (e.g. real-time translation and an AI voice bot) using state-of-the-art AI models.

The key feature is real-time streaming served by edge computing. We also leverage the ETSI MEC APIs to fine-tune or prompt the LLM with available local information (such as dialects, geography, and local culture) so that responses are faster and more useful to the user. We will demonstrate the advantages of edge computing over traditional on-device and cloud-based services. The end-user interface can be a mobile device, a wearable, an IoT device, a robot, or a vehicle.
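As a rough illustration of prompting the LLM with local information, the Python sketch below prepends location-specific context to a user request. The `LocalContext` fields, the `build_prompt` helper, the prompt wording, and the sample zone, dialect, and facts are all hypothetical; the project's actual prompt format is not described here.

```python
from dataclasses import dataclass, field


@dataclass
class LocalContext:
    """Local information attached to one edge location (hypothetical fields)."""
    zone_id: str
    dialect: str
    facts: list[str] = field(default_factory=list)


def build_prompt(context: LocalContext, user_utterance: str) -> str:
    """Prepend location-specific context to the user's request."""
    lines = [
        f"You are a voice assistant serving zone {context.zone_id}.",
        f"Prefer the local dialect: {context.dialect}.",
        "Local information:",
    ]
    lines += [f"- {fact}" for fact in context.facts]
    lines += ["", f"User: {user_utterance}", "Assistant:"]
    return "\n".join(lines)


# Illustrative values only; a real deployment would fill these from
# whatever local data is available at the edge location.
context = LocalContext(
    zone_id="zone01",
    dialect="Cantonese",
    facts=["The museum closes at 18:00.", "Gallery 2 hosts the ceramics exhibit."],
)
prompt = build_prompt(context, "Where is the ceramics exhibit?")
print(prompt)
```

The resulting string can be passed to whichever LLM runs at the edge; because only the context changes per location, the same model can serve many zones.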

Main Features:

● Users can easily find nearby virtual assistants in a map view that leverages the MEC APIs

● Local AI virtual assistants are indexed by ZoneID and CellID

● “Local” means the vector database and prompts are location dependent

● The vector database and prompts are designed and uploaded by local business owners

● A virtual assistant can be a sophisticated, state-of-the-art AI model serving as a real-time language interpreter (for example, Meta’s latest speech-to-speech massive language models), and users can find it on the map as long as it is within the same ZoneID or CellID

● The discovery range is flexible: for example, indoor localization information from the MEC APIs can serve a room-specific museum exhibit tour, while the GPS signal of the user’s device can serve a city tour

● The app is agnostic to the end-user device because computation, memory, and location information do not reside on the device. We chose iOS for demonstration purposes only.
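The indexing idea in the list above can be sketched in Python as follows: assistants are registered under a (ZoneID, CellID) key, and each assistant carries its own small vector store that is searched by cosine similarity. This is a minimal sketch under stated assumptions, not the project's implementation: the `Assistant`, `AssistantRegistry`, and `retrieve` names, the zone-wide fallback rule, and the two-dimensional toy embeddings are all hypothetical, and in practice the zone and cell identifiers would come from the MEC location service.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Assistant:
    """A local assistant with its own prompt and (text, embedding) documents."""
    name: str
    zone_id: str
    cell_id: Optional[str] = None  # None means the assistant covers the whole zone
    prompt: str = ""
    documents: list[tuple[str, list[float]]] = field(default_factory=list)


class AssistantRegistry:
    """Index assistants by (zone_id, cell_id)."""

    def __init__(self) -> None:
        self._by_location: dict[tuple[str, Optional[str]], list[Assistant]] = {}

    def register(self, assistant: Assistant) -> None:
        key = (assistant.zone_id, assistant.cell_id)
        self._by_location.setdefault(key, []).append(assistant)

    def nearby(self, zone_id: str, cell_id: Optional[str] = None) -> list[Assistant]:
        """Return cell-specific assistants first, then zone-wide ones."""
        found = list(self._by_location.get((zone_id, None), []))
        if cell_id is not None:
            found = self._by_location.get((zone_id, cell_id), []) + found
        return found


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(assistant: Assistant, query: list[float], top_k: int = 1) -> list[str]:
    """Return the top_k document texts most similar to the query embedding."""
    ranked = sorted(assistant.documents, key=lambda d: cosine(d[1], query), reverse=True)
    return [text for text, _ in ranked[:top_k]]


# Illustrative data: toy 2-D embeddings stand in for real model embeddings.
registry = AssistantRegistry()
museum = Assistant(
    "Museum guide", "zone01", "cell-3",
    prompt="Answer questions about the exhibits.",
    documents=[("Gallery 2 hosts ceramics.", [1.0, 0.0]),
               ("The cafe is on floor 1.", [0.0, 1.0])],
)
registry.register(museum)
registry.register(Assistant("Interpreter", "zone01"))

print([a.name for a in registry.nearby("zone01", "cell-3")])
print(retrieve(museum, [0.9, 0.1]))
```

Keying on both identifiers lets a room-level assistant and a zone-wide interpreter coexist: a user in another cell of the same zone still sees the interpreter, but not the museum guide.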

Software resources

• Project repository

https://github.com/Dako2/sheikah-tower.git